Research Engineering OS

Compress rework into standards + templates + checklists

Author: Li Hongmin (李鸿敏), The University of Tokyo, Computational Biology and Medical Sciences

About This Book

This is not a book that teaches you “how to write code,” but a book that teaches you how to manage research code.

Target readers:

- AI/ML researchers

- Computational biology researchers

- AI for Science researchers

Core ideas:

- Experiments are the unit (not code files)

- Exploration can be messy, but outputs must be cleanable

- Conclusions can be temporarily fragile, but the chain of evidence must be solid

Read Online

This book is fully open source and can be read online for free. If you find it helpful, you’re welcome to:

- ⭐ Star it on GitHub

- 📖 Buy a paperback/Kindle edition to keep

- 💬 Share feedback and suggestions

Version Information

- Online version: continuously updated, includes the latest content

- Print edition: v1.0, first published in February 2026

Start Reading

Start from the Preface, or jump directly to:

- Why It Always Blows Up at the End - understand the root cause

- Experiments Are the Unit - core concept

- Repository Structure - practical guide

Contact

- Email: lihongmin@edu.k.u-tokyo.ac.jp

- Website: li-hongmin.github.io

© 2026 Li Hongmin. All rights reserved.

Preface

The AI era has further blurred the boundary between research and development: from formulating hypotheses, building prototypes, and running experiments, to solidifying results into a reproducible chain of evidence—all of this is now completed within shorter cycles. Meanwhile, AI coding assistants have made it easier than ever to “write code that runs,” yet they have also made it harder to “write research code that is trustworthy, traceable, and reproducible.”

I wrote Research Engineering OS not to offer yet another abstract “methodology,” but to compress the pitfalls I have repeatedly encountered in academic machine learning / computational biology research into a set of executable default behaviors: use standards to reduce rework, use templates to lower collaboration costs, and use checklists to proactively absorb, within the daily rhythm, risks that would otherwise explode only at the final stage.

The central thesis of this short book is straightforward: exploration can be wild, but outputs must be cleanable; conclusions may be temporarily fragile, but the chain of evidence must be solid. You may iterate quickly, but you must leave enough information for every “apparently effective” result so that it can still be reproduced, questioned, and validated a week later, a month later, or on a different machine.

Accordingly, this book will repeatedly emphasize three things:

- Experiments are the minimal unit: what you record is not “which code was changed,” but “which versions and configurations constitute this experiment.”

- Default automatic traceability: make run_id, commit, config, data versions, and environment summaries part of the pipeline.

- Front-load DoD and checklists: decompose the rigor demanded at the paper-writing stage into small actions executable in everyday work.

If the work you are doing belongs to “research development” in the AI era, where you must maintain exploratory speed, remain accountable for results, and communicate efficiently with collaborators, I hope this book can serve as a minimal operating system beside your desk.

Li Hongmin (李鸿敏)

Department of Computational Biology and Medical Sciences

Graduate School of Frontier Sciences, The University of Tokyo

5-1-5 Kashiwanoha, Kashiwa-shi, Chiba 277-8561, Japan

li-hongmin.github.io

lihongmin@edu.k.u-tokyo.ac.jp

Why Do You Always Overturn Everything at the End (Exploration Debt / Validation Debt / Reproducibility Debt)

Story Setup: With Only a Few Days Left Before the Deadline, You Suddenly Stop Trusting Your Results

Imagine this scenario: only a few days remain before the paper deadline. After finally finishing all experiments, you are about to write the conclusions. But then doubt creeps in: perhaps a key experiment has not been validated under another data split? Perhaps a baseline was run unfairly? You decide to be cautious and rerun it.

Then the nightmare happens: after rerunning, the metrics differ from before. A method that was previously “significantly better” is suddenly no longer clearly ahead; or you find that changing the random seed makes the result drop. Cold sweat breaks out—months of work feel as if they were built on sand. With an imminent DDL (deadline) on one side and conclusions that no longer hold on the other, you are forced to overturn everything and start over.

Does this kind of “late-stage explosion” feel familiar?

Why This Happens: Three Types of Debt Explode in the Late Stage

Why is it that at the very last moment—“about to write the paper / about to defend / about to submit”—we suddenly discover that our results do not hold, and we have to overturn and redo everything?

This is not an isolated case. My observation is that it is usually not caused by a single-point bug, but by three types of debt that are tacitly accumulated early and then explode collectively later—exploration debt, validation debt, and reproducibility debt.

In other words, in order to save effort during the research process, we accumulate a great deal of “debt,” and only at the end are we forced to repay it all at once. The purpose of this book is to decompose this last-minute, fire-under-the-feet rework into small, manageable daily steps, so that the tragedy does not repeat.

Symptom Checklist: You May Be Heading Toward a Final Overturn

If the following symptoms feel familiar, you are likely accumulating research debt without realizing it, laying the groundwork for a final-stage “explosion”:

- Your conclusions require “storytelling” to be self-consistent: You can explain why it works, but cannot clearly state under what conditions it fails; you rarely proactively discuss negative results and boundary conditions.

- Results are extremely sensitive to environment/random seeds: Switching machines, changing a driver version, or using a different random seed causes the metrics to drift unpredictably.

- No single “clean mainline” runs end-to-end: There are many branches, scripts, and outputs, but you cannot go from preprocessing to the paper’s main metrics with a single command.

- Incomplete controls: Baselines are not strong enough, evaluation protocols are inconsistent, key ablations are missing, and failure cases are not explained.

- Figures depend on manual operations: Tables and curves rely on copy-paste/manual run selection; once source data updates, everything must be redone by hand, so the closer you get to the deadline, the less you dare to touch anything.

- You start to fear rerunning: You vaguely know that if you rerun, the results may not match your memory, or may not be recoverable at all.

The more boxes you tick, the more you are borrowing from the future: trading convenience now for high-pressure rework at the end.

A Common Plot: Why “Late-Stage Explosion” Is Almost Inevitable

Looking back at the trajectory of many projects, one finds that a “late-stage explosion” is almost inevitable. The plot often goes like this:

- Early sprint: To see a signal as quickly as possible, you cobble together code in the fastest, though not necessarily most disciplined, way: if you can edit a script directly, you do not create a new module; parameters are hard-coded; experimental outputs are scattered everywhere. In short, you just make it run first.

- Signal appears: You see a curve that “looks good,” so you keep stacking tricks, adjusting data splits, and modifying training details to push the metric a bit higher; meanwhile, you postpone control experiments and organization work.

- Paper pressure hits: You suddenly need reproducible main results, complete baselines, and interpretable ablation analyses; you start filling in these validations.

- System collapse: As soon as you try to fill them in, things break: results are unstable, baselines catch up, hidden bugs or data leakage are exposed; you realize you cannot answer questions like “Is it accidental? Is there leakage? Does it only work for a particular seed?”

The key issue is not that “exploration should not be fast”—exploration can certainly be fast. But speed presupposes that what follows is cleanable and traceable. Otherwise, the faster you run, the more leverage you apply to accumulate debt, and the higher the risk of crashing at the end.

Explanatory Model: Three Types of Debt

To explain the above issues more systematically, we introduce the concept of “debt.” Similar to technical debt in software engineering, research work can also incur different forms of debt—exploration debt, validation debt, and reproducibility debt.

Exploration Debt

Definition: To iterate faster, we temporarily take nonstandard shortcuts in code and experimental workflows; the accumulated burden of these expedients is exploration debt. Exploration debt is not inherently evil, provided that it is cleanable, discardable, and recoverable.

Typical signals:

- A proliferation of “temporary scripts”: test.py, try1.ipynb, debug_old.py… no one dares to delete them.

- Chaotic output directories: outputs/ is filled with folders like final_final2/ and exp_new_try/, with unclear relationships.

- The same logic is copy-pasted and modified in multiple places; the codebase feels stitched together, and any change triggers cascading effects.

Real cost: When you need to converge onto a mainline, you cannot extract a clean path: you cannot tell what the final method needs versus what is a dead end; you have also likely forgotten how certain results were obtained.

Validation Debt

Definition: To prove that something “works” as quickly as possible, we skip the control experiments and rigorous tests that should have been done; these skipped validations must be paid back sooner or later, and the closer you are to publication, the higher the cost.

Typical signals:

- Baselines are not strong enough, or they do not share the same evaluation protocol as the method (not directly comparable).

- Missing ablations: you do not know which change contributes the main gain.

- Inconsistent metric definitions: thresholds/post-processing/data filtering differ across runs.

Real cost: Your claims cannot withstand scrutiny: reviewers often ask not for a “larger model,” but for “more rigorous controls and analysis.”

Reproducibility Debt

Definition: To obtain results quickly, we fail to promptly freeze the environment, data versions, hyperparameter configurations, and sources of randomness; as a result, the same code may not reproduce the same conclusion.

Typical signals:

- You remember a run that performed very well, but cannot find the corresponding commit/config/seed/data version.

- Dependency drift: it ran yesterday, but today installing some package breaks it or causes a major performance drop.

- Training scripts are full of implicit defaults and hard-coded values: others (including your future self) cannot identify the key hyperparameters.

Real cost: Reproducibility debt takes away your initiative at the most critical moment: you cannot confidently answer “Are the results stable/reproducible?”, nor can you calmly handle paper checks and reproduction experiments.

Chapter Conclusion: Default Behaviors, Not Complex Tools

When people encounter these problems, they often place their hopes on more complex toolchains. This chapter emphasizes instead: what you need is not more complex tools, but a set of good default behaviors.

In the AI era, the barrier to code generation is decreasing, but the cost of validation and reproducibility has not decreased accordingly; it may even increase in relative terms because iteration is faster.

Rather than relying on heavy post hoc remediation, it is better to cultivate lightweight default habits in daily research and keep debt at a minimum:

- Any “promising” result must be rerunnable (at least once): first confirm it is not an accidental fluctuation.

- Any change that affects conclusions must be explainable as a controlled experimental difference: change one thing, test one thing; control variables.

- Any exploration path must be discardable: archive what is valuable, clean what is not, and keep the mainline clean.
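The first habit (rerun before you believe) can be sketched in a few lines. This is a minimal illustration, not a statistical test: `toy_experiment` is a hypothetical stand-in for a real training run, and the `k=2.0` threshold is an arbitrary assumption.

```python
import random
import statistics


def toy_experiment(seed: int) -> float:
    """Hypothetical stand-in for a real training run; returns one metric."""
    rng = random.Random(seed)
    return 0.80 + rng.uniform(-0.02, 0.02)


def rerun_across_seeds(experiment, seeds):
    """Run the same experiment once per seed and summarize the spread."""
    scores = [experiment(seed) for seed in seeds]
    return {
        "scores": scores,
        "mean": statistics.mean(scores),
        "stdev": statistics.stdev(scores) if len(scores) > 1 else 0.0,
    }


def gain_exceeds_noise(baseline, candidate, k=2.0):
    """Crude check: is the mean gain larger than k times the larger stdev?"""
    gain = candidate["mean"] - baseline["mean"]
    noise = max(baseline["stdev"], candidate["stdev"])
    return gain > k * noise
```

If the gain does not clear the seed-to-seed noise, treat the result as a fluctuation until further runs say otherwise.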

Principles of This Book (Ideas That Run Through Subsequent Chapters)

- Small, verifiable steps: each iteration introduces only one interpretable variable change; prioritize ensuring the system can run and be tested.

- Mandatory traceability: commit, config, seed, data version, and environment summary are all treated as part of the experimental artifact.

- Stability/exploration isolation: the stable mainline ensures paper reproducibility; the exploration branch is for rapid trial-and-error; the two are linked through checklist-based acceptance.

10-Minute Actions You Can Do Right Now

If you do only one thing right now: create a reproducible minimal entry point for the current best result.

- Centralize Outputs: Assign a run_id to the best experiment and consolidate key outputs into the corresponding directory.

- Archive the Configuration: Create a minimal config file and write down the key hyperparameters.

- Record the Fingerprint: Note the commit, seed, data version, and major dependency versions (even if handwritten for now).

- Re-run Independently: Re-run once in a new terminal/new process using that config, and confirm the result returns to the same order of magnitude.

If it goes smoothly, you obtain a reusable template; if not, you surface problems early—there is still time to fix them now.
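As one possible way to record the fingerprint without handwriting it, the sketch below writes a run.json into a run directory. The field names, the default package list (`numpy`, `torch`), and the `write_fingerprint` helper are illustrative assumptions, not a format this book prescribes.

```python
import json
import platform
import subprocess
import sys
from importlib import metadata
from pathlib import Path


def git_commit() -> str:
    """Return the current commit hash, or "unknown" outside a Git repository."""
    try:
        out = subprocess.run(
            ["git", "rev-parse", "HEAD"],
            capture_output=True, text=True, check=True,
        )
        return out.stdout.strip()
    except (OSError, subprocess.CalledProcessError):
        return "unknown"


def package_versions(names):
    """Map each package name to its installed version, or None if missing."""
    versions = {}
    for name in names:
        try:
            versions[name] = metadata.version(name)
        except metadata.PackageNotFoundError:
            versions[name] = None
    return versions


def write_fingerprint(run_dir, seed, data_version, packages=("numpy", "torch")):
    """Write run.json containing a minimal fingerprint of one run."""
    run_dir = Path(run_dir)
    run_dir.mkdir(parents=True, exist_ok=True)
    fingerprint = {
        "commit": git_commit(),
        "seed": seed,
        "data_version": data_version,
        "python": sys.version.split()[0],
        "platform": platform.platform(),
        "packages": package_versions(packages),
    }
    (run_dir / "run.json").write_text(json.dumps(fingerprint, indent=2))
    return fingerprint
```

Calling `write_fingerprint("outputs/2026-02-01_0930_baseline", seed=0, data_version="v1")` at the start of a training script is enough to make the fingerprint a side effect of running, not a separate chore.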

Subsequent chapters will expand these 10-minute actions into a sustainable Research Engineering OS.

The Smallest Unit of a Project Is Not “Code,” but an “Experiment”

In research projects, if you treat the smallest unit as “code,” you will naturally tend to use Git to organize everything: commits, branches, merges, rollbacks, and so on. This habit is highly effective in engineering development, but it often fails in research work. The reason is that the ultimate output of research is not a piece of code itself, but a verifiable conclusion or discovery. In other words, code is merely one means to reach a research conclusion; experimental results are the outcomes we truly care about.

Conclusions come from experiments. An experiment is driven by a set of traceable input conditions and produces reviewable output results. Therefore, this book defines “experiment” as the minimal unit of project operations, and organizes directory structure, logging, automation workflows, and acceptance criteria around it. By treating experiments as the fundamental unit, we can ensure that every result is well-grounded, improving the reproducibility and reliability of research.

Definition of an Experiment: Turning “I Changed Something” into a Comparable Object

Simply put, an experiment = code version + configuration + data version + environment + outputs + metrics. More plainly: any result you obtain must be able to answer “Where exactly did it come from?” In other words, whenever you “change something” in code or configuration and produce a result, that run should form an independent, comparable experimental object.

Six Questions That Must Be Answerable

To ensure traceability and comparability of experimental results, each experiment must be able to answer at least the following six key questions:

- What code was used? Specify the code version, such as the Git commit hash, and whether the repository had uncommitted changes at the time (dirty). This ensures we know exactly which version of the code was used to run the experiment.

- What configuration was used? Specify the set of configuration parameters used, such as the configuration file path and the final parameter values after parsing and expansion. Configuration determines hyperparameters and runtime options and must be recorded explicitly.

- What data was used? Specify the dataset version or hash, as well as how the data was split (train/validation/test split). Different data directly affects results, so the data source and version must be described precisely. Ideally, data consistency can be proven via a data hash or a manifest file.

- What environment was used? Describe the environment information required for execution, such as the Python version, dependency versions, driver versions, and key hardware configuration (CPU/GPU model, etc.). Environmental differences may affect reproducibility; if someone reruns the experiment elsewhere, they need to know the original environment.

- Where are the outputs stored? Clearly record the locations of all files produced by the experiment, such as model weights, logs, prediction results, cached intermediate features, and so on. Outputs are the basis for subsequent analysis and verification; they must be preserved and retrievable.

- What are the metrics, and how are they computed? Provide the metrics used for evaluation and their computation methods, including evaluation scripts and any post-processing details. Different metric conventions make results incomparable; metric definitions must be transparent and consistent.

None of these six elements can be omitted. If any one question cannot be answered, the experiment is not fully reproducible, and any conclusion drawn will lack persuasiveness. Only when all six are satisfied can we say “we know where this result came from,” and only then can we precisely reproduce it later or compare it against other experiments.
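The six questions can be turned into a mechanical check. The sketch below is one hypothetical encoding: the `ExperimentRecord` class and its field names are assumptions made for illustration, with one field per question.

```python
from dataclasses import dataclass, fields
from typing import Optional


@dataclass
class ExperimentRecord:
    """One field per question; None or an empty string means 'not answered'."""
    code_commit: Optional[str] = None     # What code was used?
    config_path: Optional[str] = None     # What configuration was used?
    data_version: Optional[str] = None    # What data was used?
    environment: Optional[str] = None     # What environment was used?
    output_dir: Optional[str] = None      # Where are the outputs stored?
    metrics_script: Optional[str] = None  # How are the metrics computed?


def unanswered_questions(record: ExperimentRecord) -> list:
    """Return the names of fields that are still empty."""
    return [f.name for f in fields(record) if not getattr(record, f.name)]
```

A run whose `unanswered_questions` list is non-empty should not be cited in a report until the gaps are filled.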

Case: AI Generates “Seemingly Complete” Code That Cannot Run

In cross-language migration (“code translation”) scenarios, API hallucination frequently occurs: the model generates functions that look reasonable but do not actually exist in the target language’s libraries, causing the generated code to be non-executable. Researchers ultimately have to rewrite and fix files one by one (see the code-translation hallucination case). If we mistakenly treat “code” as the smallest unit of a project, we may fall into the trap of focusing only on how many files or lines of code were generated. The significance of using “experiment” as the smallest unit is that our acceptance criterion is not how many files were produced, but whether we produced runnable deliverables and metrics that can be evaluated comparatively. In other words, whether an experiment succeeds does not depend on how many lines of code were changed, but on whether you obtained an executable model or result, along with clear evaluation metrics to demonstrate the effect of the change.

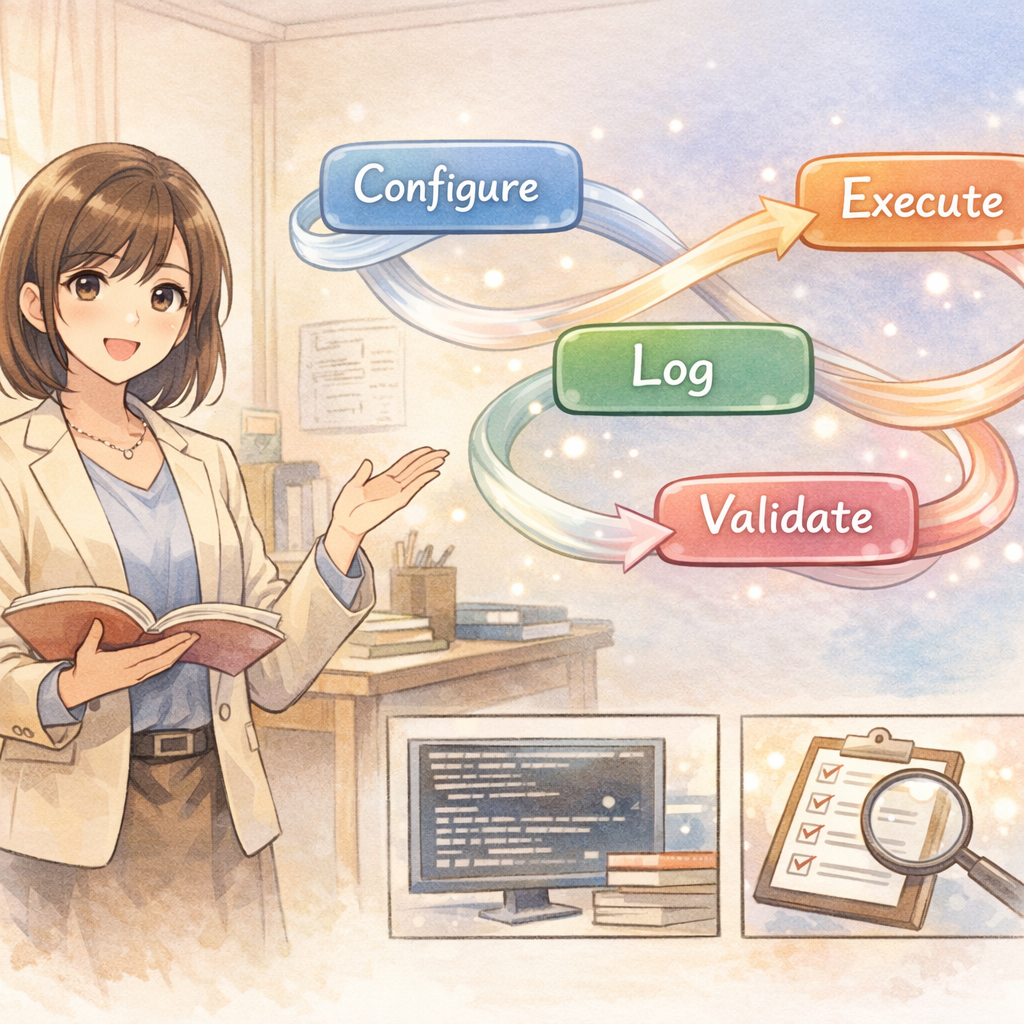

The Experimental Object Model: Decomposing the Research Process into Stable “Five Elements”

To make each stage of the research workflow clear, controllable, easy to compose, and convenient for tool automation, we can describe the elements of the experimental process using a fixed set of conceptual objects. Each experiment involves the following five objects:

- config (configuration): All key parameter settings for a single run. It should be serializable (for saving and recording), support partial overrides (for modifying defaults), and be easy to diff. A config defines how the experiment should run (e.g., model architecture parameters, learning rate, number of training epochs) and serves as the blueprint of the experiment.

- dataset: The data version used and the splitting strategy. The dataset object should clearly identify the data used, for example via a version number, data hash, or manifest file to prove data consistency. This ensures that different experiments share the same data basis, or that data differences are explicitly understood.

- run: A concrete code execution process. A run typically records a unique run_id, timestamp, the commit version of the code used, the corresponding config, random seed (seed), and runtime logs. A run represents an experiment that actually occurred: the process record of putting configuration and code into practice to obtain results.

- artifact: All output files generated by a run. For example, trained model weight files, model predictions on the test set, cached intermediate features, and intermediate data produced during evaluation are all artifacts. Artifacts are the direct outputs of an experiment; subsequent analysis, comparison, and reporting are built on them. Preserving artifacts allows us to inspect or reuse results at any time without repeating expensive computation.

- report: A human-readable summary, including plots, tables, key conclusion statements derived from experiments, and analyses of failure cases. A report can be viewed as transforming quantitative experimental results into qualitative insights; it often aggregates metrics and artifacts from multiple runs and provides references for readers or decision-makers. It is the final form in which experimental outcomes are presented.
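The three properties asked of a config (serializable, partially overridable, easy to diff) can be illustrated with plain dictionaries. This is a minimal sketch; the helper names `apply_overrides`, `flatten`, and `diff_configs` are hypothetical, and a real project might rely on a configuration library instead.

```python
import json


def apply_overrides(base: dict, overrides: dict) -> dict:
    """Return a new config with nested overrides applied; base is not mutated."""
    merged = dict(base)
    for key, value in overrides.items():
        if isinstance(value, dict) and isinstance(merged.get(key), dict):
            merged[key] = apply_overrides(merged[key], value)
        else:
            merged[key] = value
    return merged


def flatten(config: dict, prefix: str = "") -> dict:
    """Flatten nested keys into dotted names, e.g. {"model.dropout": 0.1}."""
    flat = {}
    for key, value in config.items():
        name = f"{prefix}{key}"
        if isinstance(value, dict):
            flat.update(flatten(value, prefix=name + "."))
        else:
            flat[name] = value
    return flat


def diff_configs(a: dict, b: dict) -> dict:
    """Return {dotted_key: (value_in_a, value_in_b)} for every differing key."""
    flat_a, flat_b = flatten(a), flatten(b)
    keys = sorted(set(flat_a) | set(flat_b))
    return {k: (flat_a.get(k), flat_b.get(k)) for k in keys if flat_a.get(k) != flat_b.get(k)}


def serialize(config: dict) -> str:
    """Stable JSON text (sorted keys), suitable for saving next to a run."""
    return json.dumps(config, indent=2, sort_keys=True)
```

For example, applying `{"model": {"dropout": 0.0}}` to a baseline config and diffing the two yields a single entry, `model.dropout`, which is exactly the controlled difference an ablation should have.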

The relationships among these objects can be summarized in one sentence:

config + dataset + env (environment), executed by code, produces a run; that run yields several artifacts; based on artifacts and computed metrics, we write a report.

With this object model, we decompose a complex research process into several stable “noun” objects, making it easier to think and communicate. For example, when discussing an experiment, we can clearly distinguish “which config and dataset were used,” “which artifacts were produced,” and “how the final report is written.” More importantly, this partitioning lays the foundation for subsequent tooling: we can define conventions for each object type (e.g., managing config with YAML files, storing artifacts in specific directories), thereby standardizing and automating the experimental workflow.
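One convention for the dataset object is a manifest of file hashes. The sketch below assumes the dataset is a directory of files small enough to hash locally; `build_manifest` and `manifest_id` are hypothetical helper names, and truncating the ID to 12 hex characters is an arbitrary choice.

```python
import hashlib
from pathlib import Path


def file_sha256(path, chunk_size=1 << 20):
    """Hash one file in chunks so large files do not need to fit in memory."""
    digest = hashlib.sha256()
    with open(path, "rb") as handle:
        for chunk in iter(lambda: handle.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def build_manifest(data_dir):
    """Map each file's relative path to its SHA-256 hash."""
    root = Path(data_dir)
    return {
        path.relative_to(root).as_posix(): file_sha256(path)
        for path in sorted(root.rglob("*"))
        if path.is_file()
    }


def manifest_id(manifest):
    """Derive one short ID for the whole dataset from the sorted manifest."""
    digest = hashlib.sha256()
    for name in sorted(manifest):
        digest.update(f"{name}\0{manifest[name]}\n".encode("utf-8"))
    return digest.hexdigest()[:12]
```

Storing the manifest ID in each run's metadata lets you tell at a glance whether two runs saw the same bytes.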

run_id: Making Every Run Unambiguously Referable

When writing a paper or report, you may need to frequently cite results produced under a specific configuration, e.g., “our best result under a certain setting is …”. If that experiment does not have a stable and unambiguous name, your description will be vague, which hinders reader understanding and can easily lead to confusion across experiments. To avoid this, we recommend generating a unique run_id for every run, and making this ID as readable and time-ordered as possible.

A practical approach is to combine a timestamp with a short description to form an ordered and interpretable name. For example, you can name runs by date and start time, then add a brief summary of the experiment:

2026-02-01_0930_baseline (baseline experiment started at 09:30 on Feb 01, 2026)

2026-02-01_1130_ablation_noaug (ablation experiment started at 11:30 on Feb 01, 2026 - removing data augmentation)

2026-02-02_0045_sweep_lr3e-4 (hyperparameter grid experiment started at 00:45 on Feb 02, 2026 - learning rate 3e-4)

With this naming convention, lexicographic ordering of files corresponds to chronological ordering, making it easy to see the sequence and approximate content at a glance. Each run_id is both unique and readable, avoiding vague and non-comparable names such as “experiment1” and “experiment2”.
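A small helper can generate names in this format. This is a sketch under the assumption that minute-level resolution is enough; two runs started in the same minute with the same description would collide, so a real pipeline may want to add seconds or a short suffix. `make_run_id` is a hypothetical name.

```python
import re
from datetime import datetime


def make_run_id(description, when=None):
    """Build 'YYYY-MM-DD_HHMM_<description>' from a timestamp and a short label."""
    when = when or datetime.now()
    # Keep letters, digits, dots, and hyphens; collapse everything else to "_".
    slug = re.sub(r"[^A-Za-z0-9.\-]+", "_", description.strip()).strip("_")
    return f"{when:%Y-%m-%d_%H%M}_{slug}"


run_ids = [
    make_run_id("sweep lr3e-4", datetime(2026, 2, 2, 0, 45)),
    make_run_id("baseline", datetime(2026, 2, 1, 9, 30)),
    make_run_id("ablation noaug", datetime(2026, 2, 1, 11, 30)),
]
# Plain string sorting puts the runs in chronological order.
chronological = sorted(run_ids)
```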

For file organization, you can use the run_id as the directory name and centrally store all outputs of that experiment. For example:

    outputs/<run_id>/
        run.json        # Metadata about this run (e.g., code version, start time, parameter configuration)
        run.md          # Optional: a log recording the description, observations, and preliminary conclusions for this run
        metrics.json    # Metric results for this experiment
        artifacts/      # Subfolder containing models, predictions, and other files produced by this run

With this structure, we can conveniently manage and query experimental results. For instance, when you want to compare multiple experiments, you can directly open metrics.json under the corresponding run_id directory to inspect metrics, or load models from artifacts for analysis.
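The layout above is simple enough to create and query with the standard library. The following is a minimal sketch; the helper names are hypothetical, and refusing to reuse an existing run directory is a design assumption intended to prevent silent overwrites.

```python
import json
from pathlib import Path


def init_run_dir(outputs_root, run_id, metadata):
    """Create outputs/<run_id>/ with run.json and an empty artifacts/ folder."""
    run_dir = Path(outputs_root) / run_id
    run_dir.mkdir(parents=True, exist_ok=False)  # fail loudly on a duplicate run_id
    (run_dir / "artifacts").mkdir()
    (run_dir / "run.json").write_text(json.dumps(metadata, indent=2))
    return run_dir


def save_metrics(run_dir, metrics):
    """Write metrics.json for one run."""
    (Path(run_dir) / "metrics.json").write_text(json.dumps(metrics, indent=2))


def collect_metrics(outputs_root):
    """Return {run_id: metrics} for every run that has a metrics.json."""
    table = {}
    for path in sorted(Path(outputs_root).glob("*/metrics.json")):
        table[path.parent.name] = json.loads(path.read_text())
    return table
```

Because run directories sort chronologically, `collect_metrics` returns runs in the order they were started, which is usually the order you want in a comparison table.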

Avoiding “final” chaos:

Many people like to use the word “final” when naming experiments, but a common situation is that after completing “experiment_final” they discover a small improvement is needed, leading to “experiment_final_v2” or even “final_final”. In the end, even the author cannot tell which one is truly the final result. This is a typical consequence of non-standard naming. With the run_id approach, you no longer rely on such vague labels to mark the final outcome; instead, you identify each attempt with clear time and content descriptors. As for which experiment is ultimately adopted, you simply state in the report which run_id is used. In short, do not let words like “final” interfere with experiment management: with a unified run naming scheme, every run has exactly one unambiguous name, and the confusion disappears.

A practical principle: put “comparability” as the top priority

In research, what often determines the pace of progress is not the physical time spent training models, but the repetition and uncertainty caused by a lack of comparability across experiments. If experimental conditions are inconsistent, then even after you obtain numerical results, it is difficult to determine where differences come from, and you may even end up discarding and redoing work. Common negative examples include:

- Inconsistent evaluation criteria: The baseline and your new method use different evaluation scripts, resulting in different measurement conventions and making direct comparison impossible. This forces you to spend extra time re-evaluating both under the same standard.

- Inconsistent post-processing: Experiment A and Experiment B use different post-processing or filtering strategies, causing metrics to be on different scales. For example, one result applies additional threshold filtering while the other does not; without unified processing, it is hard to clearly argue which method is better.

- Inconsistent data splits: Experiment B temporarily switched datasets or splitting schemes without recording it; comparing its results with Experiment A is then inherently unfair, because B may have used an easier test set while claiming superior performance. In such cases, even “better” results are meaningless because the comparison is not made on the same baseline.

For these reasons, we should always keep in mind: any important conclusion must come from the same evaluation pipeline, ensuring comparability between experiments. That is, when comparing two experiments, aside from the intentionally changed variable (e.g., model architecture, hyperparameters), all other components (data, evaluation criteria, post-processing methods, random seeds, etc.) should be kept as consistent as possible, or at least be traceable. Once an inconsistency is found, either correct it in a new experiment and rerun, or explicitly document the differences in the report and avoid direct comparison.
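If each run stores its metadata as a flat dictionary, this rule can be checked automatically before two results are placed in the same table. The sketch below is illustrative only: the keys (`eval_script`, `data_version`, and so on) are hypothetical examples of what a run record might contain.

```python
def uncontrolled_differences(run_a: dict, run_b: dict, intended: set) -> dict:
    """Return every metadata key that differs between two runs but was not
    declared as the intentionally changed variable."""
    keys = sorted(set(run_a) | set(run_b))
    return {
        key: (run_a.get(key), run_b.get(key))
        for key in keys
        if key not in intended and run_a.get(key) != run_b.get(key)
    }


baseline = {"model": "resnet18", "data_version": "v1", "eval_script": "eval.py", "seed": 0}
candidate = {"model": "resnet50", "data_version": "v2", "eval_script": "eval.py", "seed": 0}

# Only "model" was supposed to change; the data version changed as well.
problems = uncontrolled_differences(baseline, candidate, intended={"model"})
```

An empty result means the comparison is controlled; anything else is either a reason to rerun or a difference that must be stated in the report.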

Prioritizing comparability may require additional effort to align conditions when designing experiments, but it actually accelerates overall research progress. You avoid repeated trials and debates caused by unfair comparisons, and the conclusions from a single experiment become genuinely defensible and withstand scrutiny.

Starting from this chapter, all subsequent chapters, including repository structure, Git workflow, DoD (Definition of Done), logging practices, and AI-assisted workflows, will revolve around two core goals: “experiment traceability” and “result comparability”. The principles established in this chapter will run through the entire methodology of research management.

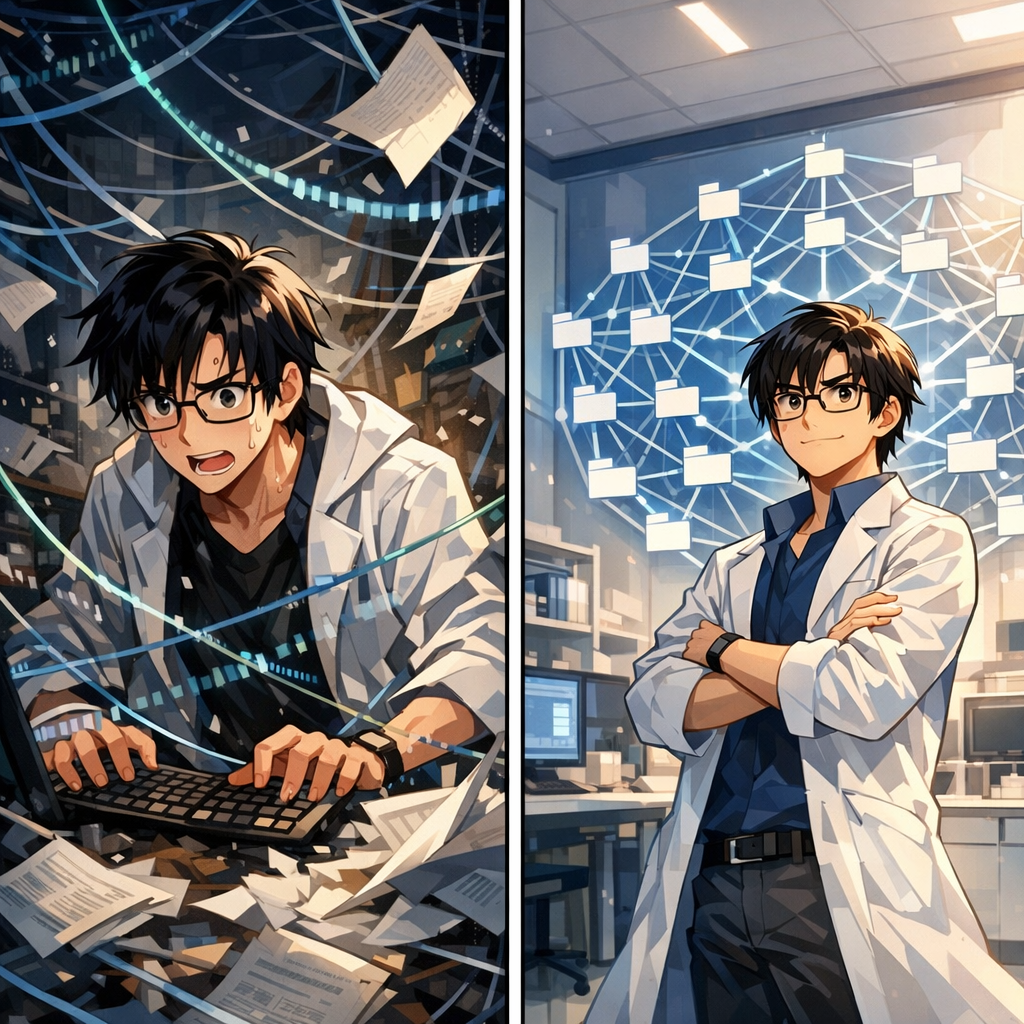

Your Repository Structure Is Your Second Brain

The “messiness” of research code is often not due to lack of ability, but because research is inherently parallel exploration: at the same time, you must maintain multiple hypotheses, multiple implementations, multiple experimental entry points, multiple outputs, and multiple plots.

When these things are piled together without structure, your brain is forced to act as an index: Which script still runs? Which output is trustworthy? Which change affected the metrics?

The purpose of a repository structure is not to look nice, but to reduce cognitive load: so that you can judge—without relying on memory—“Can this code be deleted? Is this output reliable? Is this path reproducible?”

Real Case: The Cost of Rapidly Piling Up Code

When I first started using AI coding assistants, I learned this lesson the hard way. To quickly validate an idea, I had Copilot generate a large amount of “runnable” code—data loading, model definitions, training loops, evaluation scripts, and so on. Within a few hours, I had built what looked like a complete framework.

Early “success”:

The code did run, and the experiments produced results. Excited, I continued iterating, repeatedly asking the AI to add new features: data augmentation, different model variants, various evaluation metrics… Each change had an “immediate effect,” and the codebase expanded rapidly.

The beginning of the collapse:

Two weeks later, when I needed to prepare ablation and comparison experiments for a paper, the problems surfaced:

- I could not tell which script was the latest and which was obsolete;

- The same data-loading logic had been copy-pasted into five different files, each with slight differences;

- The baseline and the new method used different evaluation code, so the results were not comparable at all;

- I wanted to reproduce a “very good result,” but could not find the configuration and data version used at the time.

Starting over:

In the end, I had to stop all new experiments and spend three full days rewriting almost all the code. This rewrite was not because the AI-generated code had bugs, but because of a lack of structure: reusable core logic and one-off experimental scripts were mixed together; quick-and-dirty trial code was not cleaned up in time; outputs were scattered everywhere and hard to trace.

This experience made me deeply understand: AI can help you produce code quickly, but the structure must be designed by humans. If, from the beginning, you separate “stable” from “exploratory,” and organize outputs by run_id, you will not fall into this kind of chaos later.

Case references:

This is not an isolated issue. When you use AI to quickly pile up a “runnable” repository but fail to isolate reusable code from one-off experimental entry points, the common ending is: every module must be rewritten, and almost all AI-generated fragments are replaced. Structure is the first line of defense against this kind of rework.

A Copy-and-Paste Directory Layout (Research-Friendly)

    repo/
        src/            # Core library: reusable, testable, maintainable (slow variables)
        experiments/    # Experimental entry points: one-off glue code (fast variables, disposable)
        configs/        # Unified configuration: yaml/json (diffable, traceable)
        data/           # Only pointers or small samples; manage large data externally
        outputs/        # Run artifacts: organized by run_id (cleanable/archivable)
        reports/        # Paper figures and conclusions: auto-generated from outputs
        scripts/        # Utility scripts for data prep/download/evaluation, etc.
        tests/          # Unit tests + smoke tests (hold the line)
        Makefile        # Common entry points: train/eval/reproduce/test
        README.md
        CLAUDE.md       # AI coding rules (recommended)
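If you prefer to create this layout with a script, a minimal sketch follows. It only creates empty directories and placeholder files with the names listed above; the `scaffold` function itself is a hypothetical helper, and it never overwrites existing content.

```python
from pathlib import Path

LAYOUT_DIRS = [
    "src", "experiments", "configs", "data",
    "outputs", "reports", "scripts", "tests",
]
LAYOUT_FILES = ["Makefile", "README.md", "CLAUDE.md"]


def scaffold(root):
    """Create the directory layout under root; safe to run more than once."""
    root = Path(root)
    for name in LAYOUT_DIRS:
        (root / name).mkdir(parents=True, exist_ok=True)
    for name in LAYOUT_FILES:
        (root / name).touch(exist_ok=True)  # creates an empty file if missing
    return sorted(path.name for path in root.iterdir())
```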

Fast Variables vs. Slow Variables: Separate “Stability” from “Exploration”

It is recommended to divide the contents of a repository into two categories:

- Slow variables (stable): parts that will be maintained long-term, reused repeatedly, and require test coverage.

- Fast variables (explore): entry scripts for quickly testing a hypothesis, short-lived glue, and one-off analyses.

In this book’s terminology: src/ contains slow variables, and experiments/ contains fast variables.

Rule of thumb: exploration can be dirty, but the core library must be clean; exploration can be fast, but evaluation must be stable.

Why is this separation so important?

In my rewrite experience, the biggest pain point was being unable to distinguish assets from consumables. When all code is mixed together, you dare not delete anything (for fear of removing important functionality), and you also dare not refactor aggressively (for fear of affecting other experiments). Once you clearly define src/ as assets and experiments/ as consumables, the psychological burden is greatly reduced:

- Changes to src/ must be made cautiously and require tests;

- Changes to experiments/ can be made freely; once the trial is over, delete it.

Definition of Done (DoD) for Each Directory

A directory name only truly reduces chaos when “what should go in” and “what should not go in” are sufficiently clear.

src/: Core Library (Reusable, Testable)

- Store reusable modules: data loading, model components, losses, evaluation, general utilities.

- Must be testable: at minimum, have smoke tests covering key pipelines.

- No hard-coding: do not include paths/parameters that are only useful for a particular run.

Anti-example:

In my rewrite case, the original “data loading” code hard-coded the path and preprocessing for a specific experiment, forcing new experiments to copy-paste and modify it. If the paths and parameters had been passed in as function arguments from the start, this problem would not have occurred.
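
As a minimal sketch of the fix described above, a loader in src/ can take the path and the preprocessing choices as arguments instead of hard-coding them. The function name `load_table` and its parameters are hypothetical and used only for illustration; they are not the code from the original project.

```python
import csv
from pathlib import Path


def load_table(path, *, delimiter=",", drop_missing=True):
    """Load a delimited text file into a list of row dicts.

    Nothing about a particular experiment is baked in: the caller
    supplies the path and the preprocessing choices.
    """
    with Path(path).open(newline="") as handle:
        rows = list(csv.DictReader(handle, delimiter=delimiter))
    if drop_missing:
        # Drop rows in which any field is empty or absent.
        rows = [
            row for row in rows
            if all(value not in ("", None) for value in row.values())
        ]
    return rows
```

An entry script under experiments/ would then decide the specifics itself, for example by calling `load_table(some_path, drop_missing=False)`, while the loader stays reusable.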

experiments/: Experimental Entry Points (Disposable)

- Store only entry points and glue: short-lived is allowed; delete after use.

- Any logic proven valuable and reusable should be migrated to src/ once it stabilizes.

Practical advice:

Name each experiment script by date or run_id, e.g., 2026-02-01_baseline.py. This makes it immediately obvious which experiments are old and which are new. Regularly (e.g., weekly) clean up scripts older than one month that have no value.
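
The cleanup routine can be partly automated. The sketch below assumes that script names start with a YYYY-MM-DD date, as in the example above; the function name `stale_experiments` and the 30-day default are illustrative assumptions. It only lists candidates, so the decision to delete stays with you.

```python
from datetime import date, timedelta
from pathlib import Path


def stale_experiments(directory, today, max_age_days=30):
    """Return date-prefixed scripts older than max_age_days, oldest first."""
    cutoff = today - timedelta(days=max_age_days)
    stale = []
    # Sorting by name also sorts by date, because the prefix is ISO-formatted.
    for script in sorted(Path(directory).glob("*.py")):
        try:
            # File names are assumed to start with YYYY-MM-DD.
            created = date.fromisoformat(script.name[:10])
        except ValueError:
            continue  # Not date-prefixed; leave it for manual review.
        if created < cutoff:
            stale.append(script)
    return stale
```

Calling `stale_experiments("experiments", date.today())` during a weekly review gives a short list to inspect before deleting anything.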

configs/: Configuration (Traceable)

- Every “paper-candidate conclusion” must correspond to a config (or a traceable way to generate it).

- A config must expand to the final parameters (avoid drift in default values).
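
One way to read “expand to the final parameters” is to merge the overrides onto the defaults once, then store the merged result with the run. The sketch below is an illustration under assumptions: the `DEFAULTS` values, the function names, and the file name `config.resolved.json` are all hypothetical, and JSON is used only to stay within the standard library.

```python
import json
from pathlib import Path

# Hypothetical defaults; in a real project they would live in src/.
DEFAULTS = {"lr": 1e-3, "batch_size": 32, "seed": 0}


def resolve_config(overrides, defaults=DEFAULTS):
    """Merge overrides onto defaults and reject unknown keys."""
    unknown = set(overrides) - set(defaults)
    if unknown:
        raise KeyError(f"unknown config keys: {sorted(unknown)}")
    return {**defaults, **overrides}


def save_resolved_config(config, run_dir):
    """Write the fully expanded config next to the run's other artifacts."""
    path = Path(run_dir) / "config.resolved.json"
    path.write_text(json.dumps(config, indent=2, sort_keys=True))
    return path
```

Because the saved file contains every parameter, a later change to a default value cannot silently alter what an old run is believed to have used.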

outputs/: Artifacts (Cleanable/Archivable)

- Store only run artifacts, and organize them by run_id.

- No overwriting: do not reuse or manually modify the same run_id directory.

- Archive important artifacts: move them into long-term storage; the repository should not carry large binaries.
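
The “no overwriting” rule can be enforced when the run directory is created. This is a minimal sketch; the function name `make_run_dir` is hypothetical, and the run_id format (date, time, short name) is an assumption modelled on the run IDs used as examples in this book.

```python
from datetime import datetime
from pathlib import Path


def make_run_dir(name, root="outputs", now=None):
    """Create <root>/<run_id>/ and fail if that run_id already exists."""
    now = now or datetime.now()
    # run_id format assumed here: YYYY-MM-DD_HHMM_<name>
    run_id = f"{now:%Y-%m-%d_%H%M}_{name}"
    run_dir = Path(root) / run_id
    # exist_ok=False raises FileExistsError instead of reusing the directory.
    run_dir.mkdir(parents=True, exist_ok=False)
    return run_dir
```

Letting the call fail loudly is a deliberate choice: a crash at startup is cheaper than discovering later that two runs wrote into the same directory.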

reports/: Figures and Conclusions (Regenerable)

- As much as possible, store only script-generated figures/tables and draft conclusions.

- All figures and tables in the paper must be reproducible from outputs/, avoiding manual drag-and-drop.

tests/: Testing (Hold the Line)

- At least one 1–3 minute smoke test: run through data loading → forward pass → loss → evaluation.

- Add assertions for critical functions: shape, NaN, value ranges, signals of data leakage, etc.
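
The two points above can be combined into one small harness. The sketch below is framework-agnostic and hypothetical: `smoke_test` and its four callables are stand-ins for your own data loader, model, loss, and evaluation code, and plain nested lists stand in for tensors.

```python
import math


def smoke_test(load_batch, forward, loss_fn, evaluate, n_classes):
    """Run one tiny batch end to end and assert basic invariants."""
    inputs, labels = load_batch()
    assert len(inputs) == len(labels) > 0, "empty or misaligned batch"

    outputs = forward(inputs)
    assert len(outputs) == len(inputs), "expected one output row per input"
    assert all(len(row) == n_classes for row in outputs), "wrong output width"
    assert all(math.isfinite(v) for row in outputs for v in row), "NaN/inf in outputs"

    loss = loss_fn(outputs, labels)
    assert math.isfinite(loss) and loss >= 0, "loss must be finite and non-negative"

    accuracy = evaluate(outputs, labels)
    assert 0.0 <= accuracy <= 1.0, "accuracy outside [0, 1]"
    return loss, accuracy
```

In a real project the same checks would typically live in tests/ and call the actual components on a tiny data subset, so that the whole run stays within the 1–3 minute budget.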

Converge Entry Points: Make “How to Run” Obvious

The most common waste in research is that others (including your future self) do not know how to run the code.

It is recommended to converge all commonly used entry points into a Makefile (or an equivalent task tool):

- make test

- make train CONFIG=...

- make eval RUN=...

- make reproduce RUN=...

When entry points are few enough and stable enough, you can keep complexity internal while exposing reproducibility externally.

Progressive Refactoring: Migrating from a Messy Project to a Sound Structure

If you already have a “messy” legacy project, do not try to tear it down and rebuild everything at once. Below is a step-by-step refactoring process:

Step 1: Identify and Separate Slow Variables vs. Fast Variables

- Review the existing code: Scan the entire project and mark which modules are core functionality (to be maintained long-term and reused repeatedly) and which are one-off experimental scripts.

- Create new directories:

  - Create src/ and migrate modules that you are sure will be reused into it;

  - Create experiments/ and move assorted run scripts and temporary code into it.

- Complete a coarse layering: After this step, the project structure will begin to look clearer, and the indexing burden in your head will be reduced accordingly. Perfection is not required; simply separate the obvious parts first.

Step 2: Extract Configurations and Parameters

- Find hard-coded values: Scan the code and identify all hard-coded key parameters (learning rate, batch size, paths, etc.).

- Create configuration files: Under configs/, create YAML or JSON files to manage parameters centrally.

- Replace incrementally: Do not modify all code at once. Instead, refactor one script at a time, and proceed to the next only after confirming it runs correctly.

Practical experience:

During my refactoring, extracting configurations alone helped me uncover three hidden bugs—the “seemingly identical” parameters in different scripts actually had different values, making results incomparable.
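
Mismatches of this kind are easy to surface once the parameters of each script have been collected into dictionaries. The helper below is a hypothetical sketch (`find_mismatches` is not part of any library), and it assumes you have already extracted the parameters by hand or from config files.

```python
def find_mismatches(configs):
    """Map each parameter to its per-script values when they disagree.

    `configs` maps a script name to the dict of parameters extracted from it.
    Parameters missing from a script are reported as "<missing>".
    """
    all_keys = sorted({key for params in configs.values() for key in params})
    mismatches = {}
    for key in all_keys:
        values = {name: params.get(key, "<missing>") for name, params in configs.items()}
        # Compare by repr so that unhashable values (lists, dicts) work too.
        if len({repr(value) for value in values.values()}) > 1:
            mismatches[key] = values
    return mismatches
```

An empty result means the scripts agree on every parameter; anything else is a list of places where results may not be comparable.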

Step 3: Standardize Output Paths

- Define a convention: Decide to use the outputs/<run_id>/ structure.

- Modify the code: Adjust all experiment entry points so that outputs are archived by run_id.

- Clean up old outputs: Organize or delete scattered legacy output files to keep the outputs directory clean.

Step 4: Add Basic Tests

- Write a smoke test: Create a 1–3 minute quick test to verify that the core pipeline runs end-to-end.

- Run after each refactor: Ensure changes do not break basic functionality.

- Increase coverage gradually: As refactoring progresses, gradually add unit tests for critical functions.

Key principle:

Progressiveness matters. Do not attempt to finish all refactoring in one go; instead, ensure existing functionality remains intact at each step before moving on. My rewrite took three days, but if I had adopted progressive refactoring from the start, it could have been spread over a week without affecting the normal pace of experiments.

Directory Hierarchy Management for Multi-Task / Multi-Project Work

When facing multiple related but independent research tasks, how to organize directories becomes an important question.

Principle: Prefer Separate Repositories

Best practice: Use an independent code repository for each research project. This helps to:

- Avoid dependency conflicts across projects;

- Ensure independence in version control;

- Simplify reproduction (each project has its own environment and dependencies).

Rule of thumb:

If two projects differ substantially in dependency versions, datasets, or runtime environments, strongly consider splitting into separate repositories.

Layered Structure for Multiple Projects in a Single Repository

If you truly need to manage multiple related tasks within one repository (e.g., multiple experiments for the same paper, or subprojects that share a large amount of code), you can adopt the following structure:

repo/

projectA/

src/

experiments/

configs/

outputs/

README.md

projectB/

src/

experiments/

configs/

outputs/

README.md

common/

src/ # Core code shared across projects

tests/ # Tests for shared functionality

scripts/ # General-purpose utility scripts

README.md # Overall description

Makefile # Cross-project common commands

Naming Conventions and Environment Isolation

- Configuration file naming: Use project prefixes, e.g., configs/projectA_baseline.yaml, configs/projectB_ablation.yaml.

- Output directories: You may maintain outputs within each project subdirectory, or unify them at the repository root while using the project name as a prefix: outputs/projectA/<run_id>/.

- Environment management: Even if the code lives in one repository, it is still recommended to maintain separate virtual environments or Docker containers for different projects to prevent dependency conflicts.

When to Share Code vs. When to Copy Code

When to share code into common/:

- Basic utility functions needed by multiple projects;

- General data loading or preprocessing logic;

- Standardized evaluation metric computation.

When to copy code:

- The code is still changing rapidly and requirements may diverge across projects;

- Sharing would cause excessive coupling and harm independence;

- The project is nearing completion and future synchronized updates are unlikely.

Practical recommendation:

At the beginning, prefer copying; extract into common/ only after the code is truly stable and you are confident it needs to be shared. Premature abstraction leads to frequent modifications of shared code and increases maintenance burden.

Quick Start: Use AI to Generate Your Repository Template

To speed up this process, your advisor suggests using an AI assistant. Simply describe the directory structure and files you need—for example, in one sentence to Claude:

“Generate a standard research project template with directories: src/, experiments/, configs/, outputs/, data/, reports/, scripts/, tests/. Include a README.md explaining each directory’s purpose and a Makefile with targets for test, train, eval, and reproduce.”

Within a minute, a complete project structure with all essential files is generated. You now have a solid foundation before writing a single line of code—a perfect example of “standing on the shoulders of giants.” Going forward, you decide to initialize every new project this way.

Why This Matters

Using AI to generate boilerplate is not lazy; it is structural wisdom. By outsourcing the tedious template creation, you ensure every project starts with best practices. The human effort is then focused on the unique science, not on redoing infrastructure.

A 10-Minute Action: “Layer Once” Your Current Project

If you do only one thing right now: roughly split the current repository into slow variables and fast variables.

- Create src/ and move in modules you are sure will be reused.

- Create experiments/ and move all entry scripts into it, allowing them to be short-lived.

- Create configs/ and extract key parameters from scripts.

- Standardize all outputs into outputs/<run_id>/.

You will immediately feel an increase in “controllability,” because you begin to distinguish what is an asset versus what is a one-off consumable.

From personal experience:

If I had established this structure when I first started using an AI coding assistant, the three-day rewrite could have been entirely avoided. A good structure is not for aesthetics; it is to stop forcing your brain to remember every detail, and to make the repository itself your reliable “second brain.”

Git Is Not for “Saving Code”; It Is for “Proving History”

Story Setup: Reviewers Ask for Reproducibility, but You Can’t Find the Code from Back Then

Three months after submitting your paper, the reviews arrive. One comment is blunt: “Please provide the code and data; we would like to reproduce the results in Table 3.”

Your heart sinks, and you quickly open the repository. But what you see makes your back go cold:

- The Git history contains only a handful of commits: “initial commit,” “update,” “fix bug,” “final version”;

- The results in the paper were produced three months ago, and you can no longer remember which version of the code was used;

- The code directory contains multiple versions of the training script (train.py, train_v2.py, train_final.py), and you are not sure which one was used;

- Worse still, you realize that you recently refactored the model code heavily for new experiments, and the current version can no longer reproduce the numbers reported in the paper.

You can only reply stiffly: “We are organizing the code and will provide it as soon as possible.” Then the painful “archaeology” begins: trying to reconstruct the code state from memory, chat logs, and experiment notes.

Does this scenario feel familiar?

Why “Casual Commits” Won’t Save You

Many people think they are using Git, but in practice they treat it as a “cloud drive”:

- They change a lot of code and commit everything at once, with a message like “update”;

- They never use branches; all changes accumulate on main;

- They only remember to commit after an experiment finishes, by which time the code has already changed again;

- The commit history provides no clue as to “which version corresponds to which experimental result.”

The problem with this workflow is that you lose Git’s most essential value: the ability to serve as a “historical proof tool.”

In engineering, Git is primarily used for collaboration and rollback. In research, Git’s core value is proof:

- Proving which version of the code produced a given result;

- Proving that every experiment in the paper corresponds to a specific code version;

- Proving that you can return to any historical version and reproduce the same results.

Git Pitfalls in Research

Pitfall 1: Commits Are Too Coarse-Grained, and Key Changes Become Untraceable

Symptom: A single commit includes changes across a dozen files, spanning data processing, model architecture, training pipeline, and more. The commit message says only “improve model.”

Consequences:

- You cannot identify which change caused a metric to shift;

- When you want to roll back a faulty change, you find you cannot undo it in isolation;

- Months later, you cannot remember what the commit actually did.

Correct practice:

- Each commit should contain one logical change only;

- Commit messages should clearly state “what changed” and “why”;

- Follow the “atomicity principle”: every commit should keep the code in a runnable state.

Pitfall 2: Misalignment Between Experiment Timing and Code Changes

Symptom: You modify the code and run experiments first; the results look good; you commit two days later. Or you commit, then temporarily tweak a few parameters and rerun.

Consequences:

- The commit you recorded does not actually match the code that produced the results;

- Others (including your future self) attempt reproduction using the commit hash and obtain different results;

- When reviewers request reproduction, you cannot find the exact code version at all.

Correct practice:

- Commit first, then run the experiment;

- For each experiment, record the commit hash and dirty status in run.json;

- If you make temporary code changes, either recommit or document the dirty modifications in the run record.
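
Recording the commit hash and dirty status can be done by the entry script itself. The sketch below shells out to two standard Git commands, `git rev-parse HEAD` and `git status --porcelain`. The helper names and the key `git_commit` are assumptions for illustration; `git_dirty` follows the key used elsewhere in this chapter.

```python
import json
import subprocess
from pathlib import Path


def _git(*args):
    """Run a git command in the current directory and return its stdout."""
    result = subprocess.run(["git", *args], capture_output=True, text=True, check=True)
    return result.stdout


def describe_git_state(head, porcelain):
    """Turn raw git output into the fields stored in run.json."""
    # Any line of porcelain output (including untracked files) counts as dirty.
    return {"git_commit": head.strip(), "git_dirty": bool(porcelain.strip())}


def write_run_json(run_dir):
    """Record the current commit hash and dirty flag in <run_dir>/run.json."""
    state = describe_git_state(_git("rev-parse", "HEAD"), _git("status", "--porcelain"))
    path = Path(run_dir) / "run.json"
    path.write_text(json.dumps(state, indent=2))
    return path
```

Calling `write_run_json` at the very start of a run, before any training happens, captures the state the code was actually in rather than the state you remember.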

Pitfall 3: Improper Branch Usage Leads to a Chaotic Mainline

Symptom: All experiments are conducted on the main branch, mixing exploratory changes with stable code; or you create many branches but never clean them up, resulting in a tangled branch structure.

Consequences:

- The main branch becomes unstable and filled with experimental code;

- When you need the “paper reproduction version,” you do not know which branch to use;

- Too many branches leave team members unsure which branch to base new work on.

A Git Branching Strategy Suitable for Research

Unlike engineering projects, a research project’s branching strategy must balance two needs:

- Stability: the paper’s results must be supported by a clean, stable code version;

- Exploration: new ideas require rapid trial-and-error and should not be constrained by heavy process.

Recommended Branch Structure

main (or stable):

- Accept only validated changes

- Every merge must pass the DoD check (see Chapter 5)

- Ensure the paper results are reproducible at any time

exp/<hypothesis-name>:

- One branch per experimental hypothesis

- Use clear names: exp/attention-ablation, exp/data-augmentation

- Short-lived branches: merge or delete after validation

- Allow "dirty" rapid iteration

archive/<paper-version>:

- Archive branches for key milestones such as submission and publication

- Created from main; never merged back

- Kept permanently to ensure traceability

Typical Workflow

Scenario 1: Validating a New Hypothesis

- Create a new branch from main:

  git checkout -b exp/new-loss-function

- Iterate quickly by trial and error on the branch; commits can be informal

- After obtaining promising results, clean up the code

- Create standardized experiment records (config + run.json)

- Merge back into main:

  git checkout main && git merge exp/new-loss-function

- Delete the experiment branch:

  git branch -d exp/new-loss-function

Scenario 2: Paper Submission

- Ensure all paper experiments on main are reproducible

- Create an archive branch from main (this form does not switch away from main, so the tag in the next step really is created on main):

  git branch archive/icml2026-v1 main

- Create a tag on main:

  git tag -a paper-icml2026-v1 -m "ICML 2026 submission version"

- Push the tag:

  git push origin paper-icml2026-v1

Scenario 3: Exploring Multiple Directions in Parallel

- Create multiple experiment branches simultaneously:

  - exp/architecture-search

  - exp/data-augmentation

  - exp/loss-function

- Advance each branch independently without interfering with others

- Manage each branch’s experimental artifacts using an independent run_id

- Merge valuable changes back into main one by one

- Delete branches with no value directly

Mark Milestones with Tags: Make Paper Results Permanently Traceable

Tags are a severely underestimated feature in Git. For research projects, the value of tags lies in:

- Assigning a permanent marker to every key version of the paper;

- Letting you return precisely to historical versions even as the main branch continues to evolve;

- Providing clear version names that facilitate citation and reproduction.

Recommended Tag Naming Conventions

# Paper versions

paper-<venue>-<version>

e.g.: paper-icml2026-v1, paper-icml2026-revision

# Experiment groups

exp-<experiment-name>

e.g.: exp-ablation-study, exp-baseline-comparison

# Primary results

result-<result-name>

e.g.: result-table3-main, result-fig2-comparison

# Milestones

milestone-<description>

e.g.: milestone-first-sota, milestone-reproducible-baseline

Tag Usage Practices

Tag each important experiment for the paper:

# Tag immediately after finishing the main experiment

git tag -a result-main-experiment -m "Main results reported in Table 2, config: configs/main.yaml"

# Record key information in the tag message

git tag -a result-ablation-study -m \

"Ablation study results (Table 3)

Run IDs: 2026-02-01_1030_ablation_*

Config: configs/ablation_*.yaml

Key finding: attention mechanism contributes 5% improvement"

When reproducing, switch directly to the tag:

# List all experiment-related tags

git tag -l "result-*"

# Switch to a specific experiment version

git checkout result-main-experiment

# Reproduce the experiment

make reproduce CONFIG=configs/main.yaml

Do Not Commit Experimental Artifacts to Git: Keep the Repository Clean with .gitignore

Core principle: Git manages source code and configuration, not experimental artifacts.

What Should Not Be Committed to Git

- Model weights: usually large (hundreds of MB to several GB); use dedicated model management tools (e.g., DVC, Git LFS, or cloud storage).

- Training logs: all run artifacts under outputs/, organized by run_id and then archived or cleaned up.

- Intermediate data: cached features, preprocessing outputs, etc.; these should be regenerable.

- Datasets: raw data is typically managed externally; only keep small samples or data pointers (manifests, download scripts) under data/.

- Virtual environments: directories such as venv/ and .conda/; use requirements.txt or environment.yaml instead.

Recommended .gitignore Template

# Python

__pycache__/

*.py[cod]

*$py.class

*.so

.Python

# Virtual environments

venv/

env/

.conda/

# Experimental artifacts

outputs/

runs/

checkpoints/

*.pt

*.pth

*.ckpt

*.h5

# Data (unless it is a small sample)

data/raw/

data/processed/

*.csv

*.parquet

# Logs

*.log

logs/

wandb/

# Temporary files

.DS_Store

*.swp

*.swo

*~

# IDE

.vscode/

.idea/

*.iml

# Exceptions: keep small sample data and configurations

# (the /** form re-includes files that patterns above, such as *.csv, would otherwise ignore)

!data/samples/**

!configs/**

Frequently Asked Questions and Solutions

Q1: The code has already changed a lot. How can I recover?

If your repository history is already very messy, do not try to “rewrite history” (unless you are very familiar with Git rebase). The recommended approach is:

- Set a baseline point by tagging the current state:

  git tag baseline-before-cleanup

- Start enforcing conventions from now on:

  - Use an independent branch for each new experiment

  - Keep each commit atomic

  - Tag important results immediately

- Fix historical issues incrementally:

  - Identify the code versions corresponding to key paper experiments and add tags retroactively

  - Record the “mapping between historical versions” in the README or documentation

  - Use the standardized workflow for new experiments; trace old experiments as much as possible

Q2: How do we unify the branching strategy in team collaboration?

- Write it into the README: document branch naming conventions and tag usage.

- Set protection rules: on GitHub/GitLab, protect the main branch; forbid direct pushes and require PR/MR.

- Code Review: before merging into main, check whether the DoD (Chapter 5) is satisfied and whether there is a complete experiment record.

- Regular cleanup: hold a weekly meeting to collectively remove useless experiment branches and archive important tags.

Q3: How should we handle experiments in a “dirty” state?

Sometimes you temporarily modify code to run an experiment but have not had time to commit; this is a “dirty” state.

Recording strategy:

- Record "git_dirty": true in run.json

- Also record the diff:

  git diff > outputs/<run_id>/changes.patch

- In run.md, note the temporary changes and the reasons

Post hoc remediation:

- If the results are valuable, commit the changes immediately and add a tag

- If it is only a temporary trial, recording it in run.md is sufficient; no need to commit
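
The recording strategy above can also be scripted, so that the patch is saved automatically whenever a run starts from a dirty tree. This is a sketch under assumptions: the function names are hypothetical, and it uses `git diff HEAD` rather than the plain `git diff` shown above so that staged edits are captured as well. Untracked files are not included in either form.

```python
import subprocess
from pathlib import Path


def current_diff(repo_dir="."):
    """Return staged and unstaged changes to tracked files as patch text."""
    result = subprocess.run(
        ["git", "diff", "HEAD"], cwd=repo_dir,
        capture_output=True, text=True, check=True,
    )
    return result.stdout


def save_dirty_patch(run_dir, diff):
    """Write the diff to <run_dir>/changes.patch.

    Returns the patch path, or None when there is nothing to record.
    """
    if not diff:
        return None
    patch_path = Path(run_dir) / "changes.patch"
    patch_path.write_text(diff)
    return patch_path
```

A typical call would be `save_dirty_patch(run_dir, current_diff())`. The saved file can later be re-applied on top of the recorded commit with `git apply`.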

Practical Case: From Chaos to a Clear Git History

Before Refactoring (Negative Example)

* a3f2d1c (HEAD -> main) update

* f8d9e0a fix

* 1b2c3d4 add new feature

* 9e8d7f6 initial commit

No useful information can be inferred from the history, and none of the paper experiments can be matched to a corresponding version.

After Refactoring (Positive Example)

* d1e2f3g (tag: paper-icml2026-v1, main) Merge exp/final-ablation

| Paper results ready for submission

|

| * c4d5e6f (exp/final-ablation) Add ablation study for attention

| * b3c4d5e Configure ablation experiments

|/

* a1b2c3d (tag: result-main-experiment) Main experiment: achieve 95.2% accuracy

| Run ID: 2026-02-01_1030_main_run

| Config: configs/main_experiment.yaml

* 9a8b7c6 (tag: milestone-baseline) Establish reproducible baseline

| All baseline experiments validated

* 8f7e6d5 Fix data preprocessing bug in train/val split

* 7e6d5c4 Add comprehensive smoke test

* 6d5c4b3 Refactor data loading module

A clear history: every critical milestone is tagged, enabling rollback at any time.

10-Minute Action: Establish a Git Baseline for the Current Project

If you do only one thing right now: establish a clear Git baseline for your project.

- Check the current status:

  git status

  git log --oneline -10

- If there are uncommitted changes, decide how to handle them:

  - Valuable changes: clean them up and commit, with a clear message

  - Temporary experiments: record them in run.md, then run

    git stash

  - Useless changes: revert with

    git checkout .

- Create a baseline tag for the current stable version:

  git tag -a baseline-$(date +%Y%m%d) -m "Baseline before implementing git workflow"

- Set up a well-structured .gitignore:

  # Use the template provided earlier

  curl -o .gitignore <template link>

  # Or create it manually

  git add .gitignore

  git commit -m "Add comprehensive .gitignore for research project"

- Document branch naming conventions: Add a section titled “Git Workflow” to README.md and record:

  - The purpose of the main branch

  - Naming conventions for exp/ branches

  - How to use tags

From this point onward, follow the conventions for branches and tags for every new experiment, so that Git truly becomes your “tool for proving history.”

From “It Runs” to “Trustworthy”: Only a Definition of Done Away

Story: The Cost of “Good Enough”

At 2 a.m., you finally get a “pretty good-looking” result: test accuracy of 94.3%, 3 percentage points higher than the baseline. Excited, you take a screenshot and post it to the team chat: “The new method works!”

Three days later, when you are ready to write the paper, you want to rerun the experiment to verify the result. You open the code and hesitate:

- Which configuration file did I use? There are three similar yaml files; I can’t remember.

- Which version of the data did I use? I think I temporarily changed the split once.

- What was the random seed? I forgot to record it.

- Was the code committed at the time? Or were there temporary local edits?

You bite the bullet and rerun it. The result comes out: 92.7%. That is 1.6 percentage points lower than before.

Your heart sinks. So which run is correct? Or are both unreliable?

The problem in this scenario is: you do not have a clear standard for judging whether “an experiment is done.”

In software engineering, there is a concept called Definition of Done (DoD). It answers: “When can I say this task is truly finished?”

In research, we also need a DoD, but with different standards:

- Engineering DoD: the code runs, tests pass, documentation is complete.

- Research DoD: results are trustworthy, reproducible, and comparable.

Why “It Runs” ≠ “Done”

In research, there are many situations that “look finished but actually plant landmines”:

Landmine 1: “Got a result” but cannot reproduce it

Symptom: You see a good result, but lack complete records of the environment, configuration, and data version. A few days later, you try to rerun it and the numbers do not match.

Real cost:

- You panic when reviewers ask for reproducibility;

- Teammates cannot build on your results;

- Worst case: the paper is rejected because the “results are not reproducible.”

Landmine 2: “Improvement works” but you do not know why

Symptom: You changed three things at once. The result did improve, but you do not know which change mattered, and you did not run ablation experiments.

Real cost:

- You cannot answer when reviewers ask for a mechanistic explanation;

- You do not know what to keep or discard in subsequent iterations;

- You may mistakenly treat ineffective or even harmful changes as the key contribution.

Landmine 3: “Comparative experiments” but inconsistent evaluation protocol

Symptom: Your method and the baseline use different evaluation scripts, or different post-processing. Your method appears better, but the comparison is actually unfair.

Real cost:

- Reviewers point out the unfair evaluation and ask you to redo it;

- After rerunning, the advantage disappears;

- You waste substantial time “equalizing” the evaluation.

DoD Checklist for Paper-Candidate Conclusions

The following checklist applies to any experimental result that “might go into the paper.” It is recommended to paste it verbatim into the project README as a team consensus.

Minimal DoD (5 mandatory items)

- Reproduce the primary metric with a single command

  # For example:

  make reproduce RUN=2026-02-01_1030_main_experiment

  # Or:

  python reproduce.py --run_id=2026-02-01_1030_main_experiment

  Starting from scratch (given the environment and data), it must be possible to reproduce the metrics reported in the paper with a single command (allowing minor fluctuations).

- Complete run records

  Each important experiment must have complete run records, including at least:

  - Git commit hash (code version)

  - Config file path and content (all hyperparameters)

  - Random seed

  - Data version (dataset version number, hash, or manifest file)

  - Environment summary (Python version, key library versions, GPU model, etc.)

  A recommended format is the run.json template in Chapter 6.

- Baseline and ablation use the same evaluation script

  All comparative experiments must:

  - Use exactly the same evaluation code;

  - Use exactly the same data split;

  - Use exactly the same post-processing;

  - Use consistent metric computation logic (e.g., the same thresholds and the same averaging scheme).

  Acceptance criterion: you can point to a single unified evaluation script, and all methods’ metrics come from that script.

- At least one smoke test runs in 1-3 minutes

  A smoke test is a quick test that validates the core pipeline, aiming to catch obvious errors as early as possible.

  Key components that must be covered:

  - Data loading (can read correctly and return data with correct shapes)

  - Model forward pass (does not crash; output shapes are correct)

  - Loss computation (values are reasonable; no NaN)

  - Evaluation pipeline (metrics are computed correctly)

  Implementation suggestion: use a tiny data subset (e.g., 10 samples), run 2-3 iterations, and ensure the end-to-end pipeline is intact.

- Figures are generated by scripts, not manual drag-and-drop

  All figures in the paper must be automatically generated from the raw data in outputs/.

  Prohibited practices:

  - Manually copying numbers from logs into Excel;

  - Manually adjusting chart styles and then taking screenshots;

  - Being unable to locate the source data files for figures.

  Recommended practices:

  - Place generation scripts in the reports/ directory (e.g., plot_main_results.py);

  - Scripts read outputs/<run_id>/metrics.json and generate figures;

  - Save figures in an editable format (e.g., PDF) and save source data (e.g., CSV);

  - Add make plots to the Makefile to generate all figures with one command.
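
For the last item of the minimal DoD, the first half of a figure script is usually the same everywhere: read each run's metrics.json and write the source data as a table. The sketch below covers only that half and leaves the plotting library open. The function name, the default CSV path, and the assumption that metrics.json holds a flat mapping of metric names to numbers are all hypothetical.

```python
import csv
import json
from pathlib import Path


def collect_metrics(run_ids, outputs_root="outputs", csv_path="reports/main_results.csv"):
    """Gather <outputs_root>/<run_id>/metrics.json files into one CSV table."""
    rows = []
    for run_id in run_ids:
        metrics_file = Path(outputs_root) / run_id / "metrics.json"
        rows.append({"run_id": run_id, **json.loads(metrics_file.read_text())})
    # Union of all metric names, so runs with extra metrics still fit.
    fields = ["run_id"] + sorted({key for row in rows for key in row} - {"run_id"})
    csv_path = Path(csv_path)
    csv_path.parent.mkdir(parents=True, exist_ok=True)
    with csv_path.open("w", newline="") as handle:
        writer = csv.DictWriter(handle, fieldnames=fields, restval="")
        writer.writeheader()
        writer.writerows(rows)
    return csv_path
```

A plotting function can then read only this CSV, which keeps every figure traceable to a list of run IDs and gives you the source data file for free.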

Enhanced DoD (recommended additional items)

After meeting the minimal DoD, the following items can further improve result credibility:

- Statistics over multiple runs

  For experiments with substantial randomness, a single run is insufficient to demonstrate effectiveness. Recommended:

  - Run with at least 3-5 different random seeds;

  - Report mean and standard deviation (or confidence intervals);

  - List the run_id corresponding to each seed in the run records.

- Failure case analysis

  Honestly document the limitations of the method:

  - Under what conditions does the method perform poorly?

  - Are there clear failure examples?

  - How sensitive is it to hyperparameters?

  This not only increases credibility but also points the way for future improvements.

- Complete ablation study

  For methods that include multiple improvements, an ablation study must answer:

  - How much does each improvement contribute on its own?

  - Which improvements are critical and which are marginal?

  - Are there interaction effects among improvements?

- Code quality checks

Use automated tools to check code quality:

- Linter (e.g., flake8, pylint): code style checks

- Type checker (e.g., mypy): type checking

- Unit test coverage: key functions must have tests

Run these checks automatically in CI.

- Data leakage checks

Ensure strict separation between the training set and the test set:

- Print sample counts before splitting and verify totals are consistent;

- Check whether the training and test sets overlap (using sample IDs or hashes);

- Time-series data: ensure the test set is temporally later than the training set.
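These three checks are easy to script. The following is a minimal sketch, not a complete leakage audit: it hashes each sample's `repr`, which only catches exact duplicates, and the function names are hypothetical. For real data you would hash a stable sample ID or the raw bytes instead.

```python
import hashlib


def sample_hash(sample):
    """Stable content hash of one sample (here: of its string representation)."""
    return hashlib.sha256(repr(sample).encode("utf-8")).hexdigest()


def check_split(all_samples, train, test):
    """Return a list of problems found; an empty list means the split looks clean."""
    problems = []
    # Check 1: the counts before and after splitting must add up
    if len(train) + len(test) != len(all_samples):
        problems.append(
            f"counts do not add up: {len(train)} + {len(test)} != {len(all_samples)}"
        )
    # Check 2: no sample may appear in both sets
    overlap = {sample_hash(s) for s in train} & {sample_hash(s) for s in test}
    if overlap:
        problems.append(f"{len(overlap)} sample(s) appear in both train and test")
    return problems


def check_temporal_order(train_times, test_times):
    """Time-series data: every test timestamp must be later than every training one."""
    return max(train_times) < min(test_times)
```

Calling `check_split` right after the split is created, and failing loudly when the returned list is non-empty, turns a silent leakage bug into an immediate error.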

DoD Checklist: Operational Steps from “It Runs” to “It’s Trustworthy”

Do Immediately After Finishing an Experiment (5 minutes)

- Record run information

    # Automatically generate run.json (see Chapter 6 tools)
    python log_run.py --run_id <id>

- Manually write key information in run.md (no more than 5 lines)

- What is the hypothesis of this experiment?

- What are the main changes?

- What are the results (summarize in one sentence)?

- What is the next step?

- Are there any noteworthy risks or anomalies?
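For readers who do not yet have a logging script, the automatic half of this step can be approximated in a few lines. The sketch below is not the log_run.py tool referred to above; it is a hypothetical stand-in, and the field names in the record are assumptions chosen to cover commit, config, seed, data version, and environment.

```python
import json
import platform
import subprocess
import sys
from datetime import datetime, timezone
from pathlib import Path


def current_commit():
    """Return the current git commit hash, or None outside a git repository."""
    try:
        out = subprocess.run(
            ["git", "rev-parse", "HEAD"],
            capture_output=True, text=True, check=True,
        )
        return out.stdout.strip()
    except (subprocess.CalledProcessError, FileNotFoundError):
        return None


def write_run_json(run_id, config_path, seed, data_version, outputs_dir="outputs"):
    """Write outputs/<run_id>/run.json and return its path."""
    record = {  # hypothetical field names, adapt to your own schema
        "run_id": run_id,
        "created_at": datetime.now(timezone.utc).isoformat(),
        "git_commit": current_commit(),
        "config_path": str(config_path),
        "seed": seed,
        "data_version": data_version,
        "python": sys.version.split()[0],
        "platform": platform.platform(),
    }
    run_dir = Path(outputs_dir) / run_id
    run_dir.mkdir(parents=True, exist_ok=True)
    path = run_dir / "run.json"
    path.write_text(json.dumps(record, indent=2))
    return path
```

Calling `write_run_json` at the very start of training, rather than at the end, means the record exists even when the run crashes halfway.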

Do Before Starting to Write the Paper (30 minutes)

- Reproducibility verification

Run the reproduction command in a new terminal (or a new environment):

    make reproduce RUN=<run_id of the paper-candidate result>

Check:

- Does it run smoothly (without errors)?

- Are the results within a reasonable range (difference no more than 1-2% or one standard deviation)?

- If the discrepancy is large, investigate the cause (environment, data, randomness).
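The "reasonable range" criterion can be made explicit so that it is applied the same way every time. A minimal sketch, assuming a 2% relative tolerance by default and an optional standard deviation taken from your multi-seed runs (the function name and defaults are illustrative):

```python
def within_tolerance(original, reproduced, rel_tol=0.02, std=None):
    """True if the reproduced metric is within rel_tol of the original,
    or within one standard deviation when std is given."""
    diff = abs(reproduced - original)
    if std is not None and diff <= std:
        return True
    return diff <= rel_tol * abs(original)
```

Writing the threshold down in code also forces you to decide it before looking at the reproduced number, not after.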

- Comparative experiment check

Check all methods to be compared:

    ls outputs/  # Find all baseline and ablation run_ids

Confirm:

- Did they use the same evaluation script? (This can be confirmed via the script path or hash in run.json.)

- Is the data split consistent?

- Are the evaluation parameters consistent?

If inconsistencies are found, you must rerun some experiments to unify the evaluation protocol.
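If run.json records the evaluation protocol, this comparison does not have to be done by eye. The sketch below assumes three hypothetical fields in run.json (`eval_script_hash`, `data_split`, `eval_params`); substitute whatever your own records actually contain.

```python
import json
from collections import defaultdict
from pathlib import Path

# Hypothetical run.json fields that together define the evaluation protocol
PROTOCOL_KEYS = ("eval_script_hash", "data_split", "eval_params")


def find_protocol_mismatches(outputs_dir, run_ids):
    """For each protocol key, group runs by value; report keys with more than one value."""
    mismatches = {}
    for key in PROTOCOL_KEYS:
        groups = defaultdict(list)
        for run_id in run_ids:
            record = json.loads((Path(outputs_dir) / run_id / "run.json").read_text())
            # Serialize so that dict-valued fields (e.g. eval_params) are comparable
            value = json.dumps(record.get(key), sort_keys=True)
            groups[value].append(run_id)
        if len(groups) > 1:
            mismatches[key] = dict(groups)
    return mismatches
```

An empty result means all compared runs share one protocol; otherwise the returned groups show which runs need to be rerun.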

- Run smoke tests

    make test  # Or: pytest tests/test_smoke.py

Ensure the core pipeline has not been broken by subsequent changes.

- Generate plots

    make plots

Check the generated plots:

- Do they reflect the latest experimental results?

- Are the numbers consistent with the run records?

- Are the axes and legends clear?

Do Before Paper Submission (1 hour)

- Completeness self-check

Cross-check the DoD list item by item:

- Every experiment cited in the paper has a run_id

- Every run_id has a complete run.json

- All comparative experiments use the same evaluation script

- Smoke tests pass

- All plots can be generated via scripts
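The first two items of this self-check can be automated once you have a list of the run_ids cited in the paper. A minimal sketch, assuming run.json uses the hypothetical field names listed in `REQUIRED_FIELDS`:

```python
import json
from pathlib import Path

# Hypothetical names for the fields every run.json is expected to contain
REQUIRED_FIELDS = ("git_commit", "config_path", "seed", "data_version")


def incomplete_runs(outputs_dir, cited_run_ids):
    """Map each problematic run_id to a description of what is missing."""
    problems = {}
    for run_id in cited_run_ids:
        path = Path(outputs_dir) / run_id / "run.json"
        if not path.exists():
            problems[run_id] = "run.json not found"
            continue
        record = json.loads(path.read_text())
        # A seed of 0 is valid, so only None and empty strings count as missing
        missing = [k for k in REQUIRED_FIELDS if record.get(k) in (None, "")]
        if missing:
            problems[run_id] = "missing fields: " + ", ".join(missing)
    return problems
```

An empty dictionary means every cited experiment has a complete record; anything else is a to-do list to clear before submission.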

- Create Git tags for key experiments

    # Main experiment
    git tag -a result-main-table2 -m \
      "Main results in Table 2, run_id: 2026-02-01_1030_main"

    # Ablation experiments
    git tag -a result-ablation-table3 -m \
      "Ablation study in Table 3, run_ids: 2026-02-01_14*"

    # Push tags
    git push origin --tags

- Write reproduction documentation

In the README or a separate REPRODUCE.md, clearly specify:

- Environment setup steps (a single command or script)

- Data preparation steps (download, preprocessing)

- Commands to reproduce each table/figure

- Expected runtime and resource requirements

Example:

    # Reproduction Instructions

    ## Environment Setup
    conda env create -f environment.yaml
    conda activate research-env

    ## Data Preparation
    bash scripts/download_data.sh
    python scripts/preprocess.py

    ## Reproduce Main Experiment (Table 2)
    make reproduce RUN=2026-02-01_1030_main
    # Expected time: 2 hours (single V100 GPU)
    # Expected metric: accuracy 94.3% ± 0.5%

    ## Reproduce Ablation Experiments (Table 3)
    bash scripts/reproduce_ablation.sh
    # Expected time: 6 hours (single V100 GPU)

How Teams Use DoD

As a Merge Criterion

In team collaboration, DoD can serve as the threshold for merging code into the main branch:

- An experiment branch must meet the minimum DoD to be merged into main;

- During code review, the reviewer checks against the DoD checklist;

- Code that does not meet the DoD is not merged until the missing items have been completed.

As a Handover Standard

When a project needs to be handed over (e.g., student graduation, team member departure), DoD ensures that knowledge is not lost:

- All important experiments have complete records, so new members can reproduce them;

- Code quality is ensured (tests and documentation);

- Data and models have clear storage and access instructions.

As a Self-Audit Standard

Even for individual projects, DoD helps you avoid “fooling yourself”:

- Regularly (e.g., weekly) check experiments that have not completed DoD and fill in missing records;

- Perform batch checks before writing the paper to avoid last-minute scrambling;

- Once the habit is formed, DoD becomes a natural workflow rather than an extra burden.

Common Obstacles and Solutions

Obstacle 1: “I’m just exploring right now; there’s no need to be so strict.”

Rebuttal: During exploration, you may lower the DoD standard, but you cannot have no standard at all.

Recommended “simplified DoD for the exploration phase”:

- No requirement for multiple-run statistics;

- No requirement for complete ablation studies;

- But must: record commit, config, and seed to ensure it can be rerun.

Once an experiment “seems valuable,” immediately upgrade to the full DoD.

Obstacle 2: “Meeting DoD takes too much time.”

Response: A one-time investment of 30 minutes yields:

- No need for emergency patch-ups during review (saves days);

- A clear baseline for subsequent improvements (saves repeated work);

- No anxiety about “not being reproducible” at submission time (reduces psychological burden).

Practical suggestions:

- Script the DoD checks to reduce manual operations;

- Add checks in Git pre-commit hooks (see Chapter 7);

- With proficiency, DoD will integrate into daily workflows and no longer be an extra cost.

Obstacle 3: “We already have experiment management tools (e.g., MLflow, W&B).”

Response: Tools are great, but DoD is a standard, not a tool.

Tools can help you:

- Automatically record run information (save time);

- Visualize experimental results (easy comparison);

- Store models and artifacts (convenient management).

But tools cannot replace:

- Your definition of “what counts as done”;

- Your checks on “whether the evaluation is fair”;

- Your verification of “whether the code is reproducible”.

Recommendation: Combine the DoD checklist with tooling; for example, record a “DoD compliance status” field in the MLflow run.

10-Minute Action: Perform a DoD Check on the Current Best Result

If you do only one thing right now: perform a complete DoD check on your currently “most promising” experimental result.

- Find the run_id for this experiment (if it does not exist, create one now)

- Inspect the run records

    # Check whether run.json exists
    ls outputs/<run_id>/run.json

    # If not, remediate immediately:
    # 1. Record the git commit: git log -1 --format="%H"
    # 2. Record the config file path
    # 3. Record the seed (if you remember it)
    # 4. Record the data version (check the data directory or logs)
    # 5. Record the environment: pip freeze > requirements_<run_id>.txt

- Attempt reproduction

    # Switch to the recorded commit
    git checkout <commit_hash>

    # Re-run with the recorded config
    python train.py --config <config_path> --seed <seed>

    # Check whether the results are within a reasonable range

- Record the check results

Record the following in outputs/<run_id>/dod_check.md:

- Are the records complete?

- Is it reproducible? (result discrepancy: ____)

- Is the evaluation fair?

- Are there tests?

- Can the figures be generated?

- If issues are found, fix them immediately

- Incomplete records: supplement run.json

- Not reproducible: investigate discrepancies and rerun

- Unfair evaluation: unify the evaluation script and rerun all comparative experiments
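To make recording the check results a one-liner, the five questions can be written to dod_check.md by a small helper. This is only a sketch: the checkbox layout and the function name `write_dod_check` are assumptions, not a format prescribed by this book.

```python
from pathlib import Path

CHECKS = (
    "Are the records complete?",
    "Is it reproducible?",
    "Is the evaluation fair?",
    "Are there tests?",
    "Can the figures be generated?",
)


def write_dod_check(run_id, answers, notes="", outputs_dir="outputs"):
    """answers: dict mapping each question in CHECKS to True/False.
    Questions that are not answered are treated as not yet passed."""
    lines = [f"DoD check for {run_id}", ""]
    for question in CHECKS:
        mark = "x" if answers.get(question) else " "
        lines.append(f"- [{mark}] {question}")
    if notes:
        lines += ["", f"Notes: {notes}"]
    path = Path(outputs_dir) / run_id / "dod_check.md"
    path.parent.mkdir(parents=True, exist_ok=True)
    path.write_text("\n".join(lines) + "\n")
    return path
```

The `notes` argument is a natural place for the measured result discrepancy, so that the number is stored next to the verdict.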

After completing this check, you will have a clear understanding of the credibility of this result. If it passes the DoD, you can confidently include it in the paper; if it does not, it is still early enough to fix it now.

Remember: finding problems early is better than finding them before submission; finding them before submission is better than finding them during review; finding them during review is better than being questioned after publication.

Experiment Logging Automation: What’s Missing Is Not Tools, but Default Behavior

Story Setup: “Archaeological Work” Three Months Later

Your paper has been accepted, but the reviewers request supplementary materials explaining the exact setup of a particular experiment in Table 4. You open the code repository and begin “archaeology”:

Step 1: Find the logs

You remember this experiment was run three months ago. You open the outputs/ directory and see a pile of date-named folders. The problem is that you cannot recall the exact date. You can only open them one by one to check whether the results correspond to that experiment.

Step 2: Find the configuration

You finally locate the result files, but there is no record of the configuration. You comb through the code history, trying to find the hyperparameters used at the time. In one commit you find a configuration that seems plausible, but you are not sure whether it was the final version: you remember temporarily changing the learning rate, but you do not remember what you changed it to.

Step 3: Find the data

The data path in the code is data/v2/, but your current data directory is data/v3/. You do not remember whether you switched dataset versions back then. You search your chat history for “data,” trying to find clues.

Step 4: Give up

After an entire afternoon of struggle, you decide to rerun the experiment. However, because the parameters are uncertain, the rerun results do not match what was reported in the paper. In the supplementary materials you can only write: “Due to the long time elapsed, some experimental details may be inaccurate.”

Reviewer’s reply: “We cannot accept a paper where the authors cannot reproduce their own results.”

This tragedy could have been avoided.

If you had spent 2 minutes recording key information at the end of the experiment, you would not have faced a nightmare three months later.

The issue is not a lack of tools (MLflow, W&B, and TensorBoard are all excellent), but rather the absence of logging as a default behavior: many people think, “This is just a quick try; no need to record it,” and then they keep trying and forget to log. In the end, even valuable experiments leave no trace.

A Two-Layer Logging Strategy: Machine-Precise + Human-Concise

The core challenge of experiment logging is balancing two needs:

- Machines require complete and precise information (for reproducibility and automated analysis);

-